AI Governance in Practice

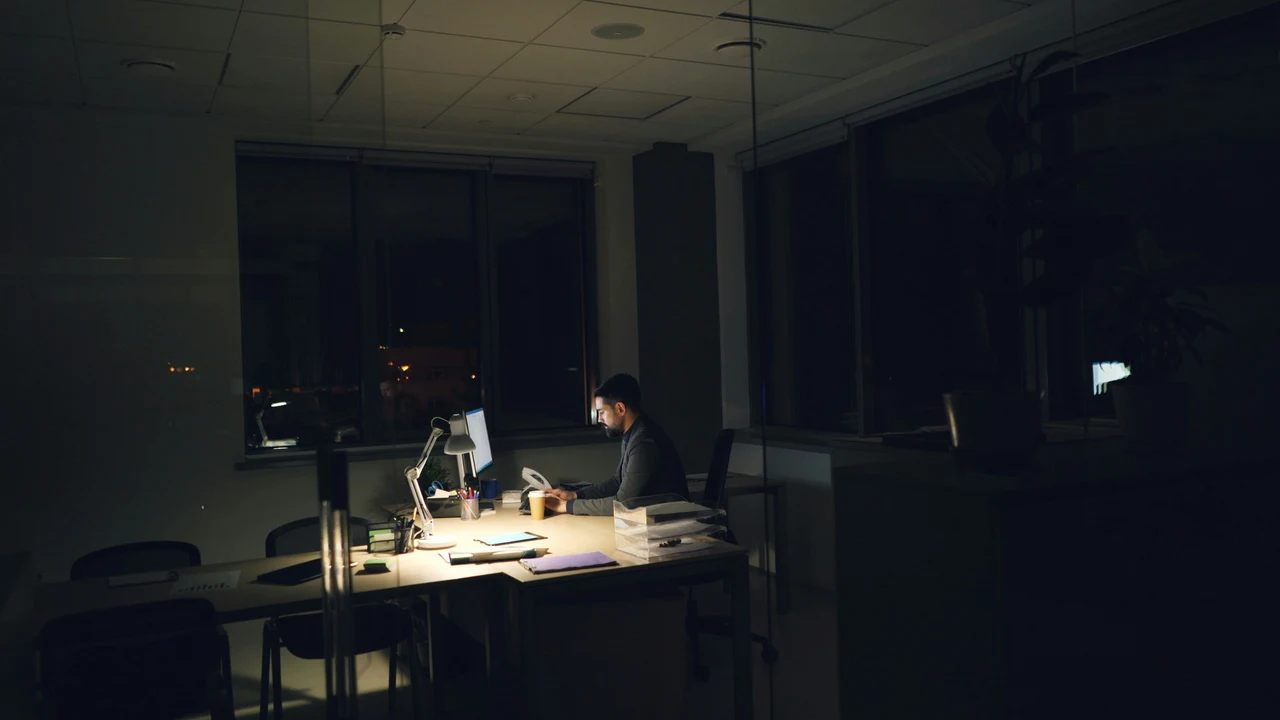

When automation becomes delegated authority

From Models to Decisions

Part of A Sceptic's Guide to AI.

Most AI governance focuses on the model. Its accuracy, its biases, its training data.

These matter. But they are not the hard problem.

The hard problem is what happens to the organisation around the model — how authority shifts, how oversight erodes, and how decisions that once required human judgement quietly become automated.

This page is not about model governance. It is about organisational governance: the behavioural shifts, authority transfers, and institutional blind spots that emerge when AI enters real decision-making. The model is rarely the weakest link. The organisation usually is.

From Automation to Delegation

There is a ladder of delegation that organisations climb, often without realising how far they have gone:

- Suggest. The system surfaces options. A human chooses. Authority remains with the person.

- Recommend. The system ranks options and highlights a preferred choice. The human still decides, but the framing has shifted.

- Decide. The system selects an action. The human reviews — in theory. In practice, review rates decline quickly.

- Execute. The system acts without waiting for approval. The human is notified after the fact, if at all.

- Self-correct. The system detects its own errors and adjusts. The human is no longer in the loop — the loop has closed around the machine.

Each step feels incremental. By the time you reach "execute," the organisation has handed over authority it may not have intended to delegate.

Companies often think they are buying productivity. In reality, they may be redesigning authority.
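The ladder can be made auditable by recording, for each system, the rung it was approved for alongside the rung it is observed to operate at. The Python sketch below assumes a hypothetical `DelegationLevel` encoding. The helper names are invented for illustration and do not come from any governance standard.

```python
from enum import IntEnum


class DelegationLevel(IntEnum):
    """Hypothetical encoding of the delegation ladder: a higher value means more authority delegated."""
    SUGGEST = 1       # system surfaces options; a human chooses
    RECOMMEND = 2     # system ranks options; the human still decides
    DECIDE = 3        # system selects an action; a human reviews (in theory)
    EXECUTE = 4       # system acts; the human is notified afterwards, if at all
    SELF_CORRECT = 5  # system adjusts itself; no human in the loop


def human_gates_action(level: DelegationLevel) -> bool:
    """True if, on paper, a human must act before the output takes effect."""
    return level <= DelegationLevel.DECIDE


def rungs_of_drift(approved: DelegationLevel, observed: DelegationLevel) -> int:
    """How many rungs a system has climbed beyond the level it was approved for."""
    return max(0, int(observed) - int(approved))


# Example: a tool approved to recommend that, in practice, now executes.
print(rungs_of_drift(DelegationLevel.RECOMMEND, DelegationLevel.EXECUTE))  # 2
print(human_gates_action(DelegationLevel.EXECUTE))                          # False
```

The useful number is the drift, not the level itself: a system operating two rungs above its approval is authority the organisation never consciously handed over.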

Behaviour Around the Model

The most underexamined governance risk is not what the model does. It is what people do differently once the model is present.

- People stop checking. When a system is right 95% of the time, review feels like wasted effort. Oversight decays precisely when the system is good enough to trust but not good enough to leave unsupervised.

- People defer. The model becomes the default. Disagreeing requires effort. Agreeing requires nothing. The path of least resistance reshapes the organisation's judgement.

- People hide behind the model. "The algorithm recommended it" becomes a shield against accountability.

- Weak decisions get laundered. A decision that would be challenged if a person made it passes without scrutiny when a system makes it.

The governance problem is often not "the model was wrong," but "the organisation changed its behaviour around the model."

Human-in-the-Loop Theatre

There is a spectrum of what "human in the loop" actually means:

- Human present. Someone is available to review outputs. In practice, they approve everything — the volume is too high, the interface discourages override, or they lack the context to evaluate.

- Human reviewing. Someone reads the output and makes a conscious judgement. This requires time, expertise, and organisational support for disagreement.

- Human governing. Someone has the authority, information, and incentive to challenge, override, or halt the system. This is rare.

Most organisations claiming human-in-the-loop oversight are at the first level. The human is present. The governance is not. This is AI theatre applied to oversight itself.

A human in the loop is not the same as human judgement in command.

Initiative Plus Access

The risk posed by an AI system is not primarily a function of how intelligent it is. It is a function of three things:

- Initiative — can it take actions on its own, or does it wait to be asked?

- Permissions — can it send messages, modify records, allocate resources, approve transactions?

- Access — what data and systems can it reach?

Operational risk is driven by what the system can do, not what it knows. A highly capable model that can only suggest is far less dangerous than a mediocre model that can execute.

Mediocre intelligence with initiative and permissions can be more dangerous than impressive intelligence without access.
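The claim that capability barely enters the risk calculation can be sketched in code. In this rough Python illustration, the `AgentProfile` fields, the permission names and the `PERMISSION_WEIGHTS` values are all assumptions made up for the example, not a recognised scoring method. Note that `capability` is recorded but deliberately never used.

```python
from dataclasses import dataclass, field


@dataclass
class AgentProfile:
    name: str
    capability: float     # e.g. a benchmark score in [0, 1]; deliberately ignored below
    acts_unprompted: bool  # initiative: does it act without being asked?
    permissions: set[str] = field(default_factory=set)        # what it is allowed to do
    reachable_systems: set[str] = field(default_factory=set)  # access: what it can reach


# Hypothetical weights: rough damage potential if each permission is misused.
PERMISSION_WEIGHTS = {
    "suggest": 0,
    "send_message": 2,
    "modify_records": 3,
    "allocate_resources": 4,
    "approve_transactions": 5,
}


def exposure(agent: AgentProfile) -> int:
    """Illustrative operational exposure: driven by what the agent can do, not what it knows."""
    base = sum(PERMISSION_WEIGHTS.get(p, 1) for p in agent.permissions)  # unknown permissions count as 1
    reach = len(agent.reachable_systems)
    multiplier = 2 if agent.acts_unprompted else 1  # initiative amplifies everything else
    return (base + reach) * multiplier


clever_advisor = AgentProfile("clever-advisor", capability=0.95, acts_unprompted=False,
                              permissions={"suggest"}, reachable_systems={"wiki"})
busy_agent = AgentProfile("mediocre-agent", capability=0.6, acts_unprompted=True,
                          permissions={"send_message", "modify_records", "approve_transactions"},
                          reachable_systems={"crm", "erp", "email"})
print(exposure(clever_advisor), exposure(busy_agent))  # 1 26
```

The doubling for initiative is arbitrary. The design point is only that the score moves with permissions and reach, not with how clever the model is.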

Epistemic Hygiene

Governance is not only about what happens when a model is wrong. It is about what happens to the quality of reasoning when a model is available.

- Anchoring. When a model provides a first answer, subsequent review is anchored to it. The model does not just inform the decision — it frames it.

- Premature convergence. Teams reach conclusions faster because the AI provides an early synthesis. Speed that forecloses alternatives is not efficiency — it is a narrowing of thought.

- Confidence inheritance. AI outputs arrive without hesitation. Humans read fluency as certainty — even when the underlying evidence is thin.

- Disappearing dissent. It is socially easier to agree with a system than to challenge a colleague. The minority view stops being voiced not because it was wrong, but because the cost of disagreement increased.

Good governance does not just ask whether the model is accurate. It asks whether the organisation can still think clearly in the model's presence.

Organisational Deskilling

There is a governance risk that emerges slowly and is visible only in retrospect: the organisation loses the ability to do what the AI does for it.

- Skills atrophy. People who no longer perform a task stop being able to evaluate whether it was performed well.

- Institutional memory thins. The organisation retains the AI's output but loses the understanding that comes from doing the work.

- Recovery capacity degrades. If the system fails, the organisation may no longer have the capability to operate without it.

- Hiring shifts downstream. Roles get redefined around AI supervision. The people hired to oversee the system may never develop the judgement it was built to augment.

An organisation can become more productive in the short run and less competent in the long run — and only notice when the system fails.

Fast Wrong vs Slow Wrong

AI introduces two distinct failure modes. Governance frameworks tend to address only one.

- Fast wrong. The model produces an incorrect output — a hallucination, a misclassification, a bad recommendation. Visible. Catchable.

- Slow wrong. The organisation adapts to the model in ways that degrade decision quality without any single identifiable failure. Standards drift. "Good enough" shifts to match what the system produces rather than what the task requires.

Fast wrong is a technical problem. Slow wrong is an institutional one. Almost nobody monitors for the second, although the signals are there to be read:

- Are override rates declining because the model improved — or because people stopped looking?

- Are review times shrinking because reviewers are more efficient — or because they are rubber-stamping?

- Is the team still capable of producing the same quality output without the model?
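The first two questions can be turned into a routine check. A minimal Python sketch, assuming the organisation logs each review with a period label, whether the output was overridden and how long review took, and separately estimates the model's error rate through a blind re-review of a sample (the `audited_error_*` arguments). All names here are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class ReviewEvent:
    period: str            # reporting period label, e.g. "Q1"
    overridden: bool       # did the reviewer change the model's output?
    review_seconds: float  # time spent reviewing this item


@dataclass
class PeriodStats:
    override_rate: float
    median_review_seconds: float


def summarise(events: list[ReviewEvent], period: str) -> PeriodStats:
    """Override rate and median review time for one reporting period."""
    rows = [e for e in events if e.period == period]
    if not rows:
        raise ValueError(f"no review events for {period}")
    rate = sum(e.overridden for e in rows) / len(rows)
    return PeriodStats(rate, median(e.review_seconds for e in rows))


def possible_rubber_stamping(before: PeriodStats, after: PeriodStats,
                             audited_error_before: float,
                             audited_error_after: float) -> bool:
    """Flag when overrides and review time both fall but independently audited error did not.

    audited_error_* is assumed to come from a separate blind re-review of sampled
    outputs, so it does not depend on the reviewers being monitored.
    """
    oversight_fell = (after.override_rate < before.override_rate
                      and after.median_review_seconds < before.median_review_seconds)
    model_improved = audited_error_after < audited_error_before
    return oversight_fell and not model_improved


events = [
    ReviewEvent("Q1", True, 60), ReviewEvent("Q1", False, 90),
    ReviewEvent("Q1", True, 120), ReviewEvent("Q1", False, 80),
    ReviewEvent("Q2", False, 10), ReviewEvent("Q2", False, 15),
    ReviewEvent("Q2", False, 20), ReviewEvent("Q2", False, 12),
]
q1, q2 = summarise(events, "Q1"), summarise(events, "Q2")
print(possible_rubber_stamping(q1, q2, audited_error_before=0.08, audited_error_after=0.09))  # True
```

Falling overrides are not suspicious on their own. The flag only fires when oversight thins while the independent error estimate fails to improve.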

The model that fails loudly is manageable. The model that succeeds quietly while the organisation forgets how to think is not.

The Verification Paradox

AI is most valuable precisely where verification is hardest. If a task is easy to check, the AI saves time but not judgement. The real value proposition is in tasks where verification is slow, expensive, or impractical:

- Messy summarisation. Checking the summary requires reading the originals — which defeats the purpose.

- Edge cases. Unusual inputs where both the model and the reviewer are operating at the limits of their competence.

- Cross-department workflows. Outputs spanning domains where no single person has the full context.

- Delayed feedback. Consequences visible only weeks or months later — forecasts, resource allocation, strategic recommendations.

The market pulls AI toward the places where verification is most difficult. The implications for trust decisions are significant: the tasks where AI is most commercially attractive are the tasks where oversight is hardest.

Decision-Centric Governance

Effective governance is decision-centric. It starts with the decision the AI is entering and works backward to the controls required.

Four questions define the governance posture:

- What decision is the AI entering? Not "what does the model do?" but "what human decision does this output shape or replace?"

- What is the cost of error? The answer determines the rigour of oversight required.

- Who owns the outcome? If nobody can answer this, governance has already failed.

- Can the decision be appealed? Can someone affected by the output have it reviewed by a human with the authority to override?

You do not govern AI well by asking what the model is. You govern it by asking what human decision it is entering.

Where Is AI Already Shaping Reality?

Before asking how to govern AI in theory, map where it is already shaping decisions in practice. Most organisations are further along the delegation ladder than they assumed.

If you only do one thing after reading this, do this. For any AI system currently in use, answer:

- Where on the delegation ladder is this system operating — suggest, recommend, decide, execute, or self-correct?

- Who last overrode the system's output? How long ago? What happened?

- If this system were switched off tomorrow, does the team have the skills and processes to perform the task manually?

- Who is accountable for the decisions this system shapes? Can they name the system and describe what it does?

- Has anyone affected by this system's outputs ever successfully challenged a decision?

If any of these questions cannot be answered — or if the answers are uncomfortable — governance is not a future project. It is an overdue one.

What Must Remain Contestable?

The ultimate test of AI governance is not whether the system performs well on average. It is whether its decisions can be challenged, reconstructed, and overturned.

- Can the decision be challenged? Not a feedback form — a genuine path to review.

- Can the decision be reconstructed? If the trail from input to output to action is opaque, accountability is impossible.

- Who can appeal? An appeal process that requires legal representation is not accessible. One buried in terms of service is not visible.
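Reconstruction is only possible if the trail is captured at the time of the decision. The Python sketch below assumes a hypothetical `DecisionRecord` log entry. The fields and the loan example are illustrative, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical log entry linking one AI-shaped decision from input to action."""
    decision_id: str
    input_ref: str               # pointer to the data the model actually saw
    model_version: str
    model_output: str
    action_taken: str
    owner: Optional[str]         # named person accountable for the outcome
    appeal_route: Optional[str]  # where an affected person can contest it


def contestability_gaps(record: DecisionRecord) -> list[str]:
    """List what is missing before this decision could be challenged, reconstructed and overturned."""
    gaps = []
    # The trail from input to output to action must be complete to reconstruct the decision.
    for label, value in [("input", record.input_ref),
                         ("model version", record.model_version),
                         ("model output", record.model_output),
                         ("action taken", record.action_taken)]:
        if not value:
            gaps.append(f"{label} not retained")
    if not record.owner:
        gaps.append("no named owner")
    if not record.appeal_route:
        gaps.append("no appeal route")
    return gaps


record = DecisionRecord("loan-0042", input_ref="", model_version="v3.1",
                        model_output="decline", action_taken="application declined",
                        owner=None, appeal_route=None)
print(contestability_gaps(record))
# ['input not retained', 'no named owner', 'no appeal route']
```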

Judgement Augmentation vs Labour Substitution

The distinction that matters most is not "AI vs human." It is whether AI augments human judgement or substitutes for it. Augmentation preserves the human as decision-maker. Substitution removes them.

Both are valid in the right context. But organisations routinely adopt AI for augmentation and end up with substitution — through convenience, cost pressure, and the slow erosion of oversight described throughout this page.

The question worth asking is not "can the model do this?" It is "should this decision remain contestable?" Those are different questions. The first is about capability. The second is about governance.

AI may not just automate decisions. It may reshape what an organisation is willing to call good judgement.

Common Questions

Is AI governance mainly about frameworks or behaviour?

Frameworks matter, but governance fails when it exists only on paper. The real test is whether an organisation's behaviour changes — whether people still check outputs, challenge recommendations, and maintain ownership of decisions. A governance framework that does not change behaviour is decoration.

What is the difference between automation and delegation?

Automation handles a task. Delegation hands over authority. The difference is whether the system is executing instructions or making judgements. When an AI moves from suggesting options to selecting them, the organisation has delegated authority — whether it intended to or not.

Is having a human in the loop enough?

Not necessarily. A human who is present but not empowered, informed, or given time to evaluate is not governing the process. Human-in-the-loop oversight only works when the human has the context, authority, and practical capacity to override the system.

What is the biggest governance risk with AI agents?

The combination of initiative and access. An AI system that can take actions — send messages, modify records, allocate resources — without meaningful review creates risk proportional to its permissions, not its intelligence. Mediocre intelligence with broad access can cause more damage than impressive intelligence without it.

What should leaders ask before deploying AI in decision-making?

Four questions: What decision is the AI entering? What is the cost if it gets that decision wrong? Who owns the outcome? And can the decision be appealed by someone affected by it? If any of those questions cannot be answered clearly, the deployment is not ready.

Further Reading

- A Sceptic's Guide to AI — the full framework for thinking clearly about AI

- When Should You Trust AI? — a structured method for calibrating trust in AI systems

- AI Theatre vs Real AI — how to tell whether AI capability is real or cosmetic

- AI Bias Examples — how useful AI systems can still produce unfair outcomes

I advise organisations on AI governance, deployment risk, and decision architecture. If your team is navigating these questions, get in touch.

Written by Dr Tristan Fletcher.

If you are deploying AI inside an organisation, this is the question to start with:

What decisions are we no longer able to challenge?