Relevance Vector Machines Explained

A Step-by-Step Introduction to Relevance Vector Machines

This tutorial paper has been written to make Tipping's Relevance Vector Machines (RVMs) as simple to understand as possible for those with minimal experience of Machine Learning. It assumes knowledge of probability in the areas of Bayes' theorem and Gaussian distributions including marginal and conditional Gaussian distributions. It also assumes familiarity with matrix differentiation, the vector representation of regression and kernel (basis) functions.

What Is a Relevance Vector Machine?

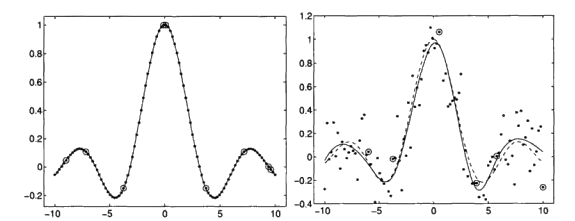

A Relevance Vector Machine (RVM) is a Bayesian sparse kernel method introduced by Michael Tipping in 2001. Like Support Vector Machines, RVMs use kernel functions to model non-linear relationships, but they take a fundamentally different approach: instead of finding maximum-margin hyperplanes, RVMs place a prior over the model weights and use Bayesian inference to determine which data points (the "relevance vectors") are most important for prediction.
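For regression, Tipping's formulation can be summarised in three parts:

- the model is a weighted sum of kernel functions centred on the training inputs;
- the targets carry Gaussian noise of precision β;
- each weight has its own zero-mean Gaussian prior.

The symbols below (targets tₙ, bias w₀, kernel K, N training points) are chosen here for illustration.

```latex
y(\mathbf{x}; \mathbf{w}) = w_0 + \sum_{n=1}^{N} w_n \, K(\mathbf{x}, \mathbf{x}_n)

p(t_n \mid \mathbf{w}, \beta) = \mathcal{N}\!\left(t_n \mid y(\mathbf{x}_n; \mathbf{w}),\, \beta^{-1}\right)

p(\mathbf{w} \mid \boldsymbol{\alpha}) = \prod_{i=0}^{N} \mathcal{N}\!\left(w_i \mid 0,\, \alpha_i^{-1}\right)
```

The last line is what makes pruning possible. Each αᵢ is a separate hyperparameter, so the data can switch individual weights off.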

The key advantage of RVMs over SVMs is sparsity — they typically use far fewer basis functions, producing faster predictions at test time. They also provide probabilistic outputs (calibrated uncertainty estimates), which SVMs do not naturally offer. The trade-off is that training an RVM can be more computationally expensive than training an SVM, and the solution is not guaranteed to be globally optimal.

What the Tutorial Covers

- Bayesian inference and the evidence framework

- How RVMs achieve sparsity compared to SVMs

- The relevance vector and automatic relevance determination

- Kernel functions and basis function selection

- Practical implementation considerations

Relevance Vector Machine vs Support Vector Machine

Both RVMs and SVMs are kernel-based methods for classification and regression, but they differ in important ways. SVMs minimise a regularised empirical risk and produce solutions defined by support vectors: data points that lie on or within the margin. RVMs instead maximise the marginal likelihood (type-II maximum likelihood) and prune irrelevant basis functions during training, yielding a much sparser model. Where an SVM might retain 30–50% of training points as support vectors, an RVM will typically use fewer than 5% as relevance vectors.

For a full introduction to SVMs, see the companion tutorial on Support Vector Machines Explained.

Relevance Vector Machines vs Gaussian Processes

Relevance Vector Machines and Gaussian Processes (GPs) are both Bayesian approaches to regression and classification that provide calibrated uncertainty estimates with each prediction. However, they differ significantly in how they achieve this. A Gaussian Process defines a distribution directly over functions and makes predictions by conditioning on the observed data. Its computational cost scales as O(n³) in the number of training points because of the matrix inversion involved. RVMs, by contrast, place a prior over the model weights and use automatic relevance determination to prune the vast majority of basis functions during training. The result is a sparse model that is much faster at test time.
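To make the contrast concrete, here is a minimal sketch of a zero-mean GP regression predictor. It assumes an RBF kernel with unit signal variance and a fixed noise variance `noise_var`; both are illustrative choices, and `gp_predict` is a hypothetical name. The Cholesky factorisation of the n × n matrix is the O(n³) step. Every prediction also needs kernel evaluations against all n training points.

```python
import numpy as np


def rbf_kernel(X1, X2, length_scale=1.0):
    """Gaussian (RBF) kernel with unit signal variance between the rows of X1 and X2."""
    sq = np.sum(X1**2, axis=1)[:, None] + np.sum(X2**2, axis=1)[None, :] - 2.0 * X1 @ X2.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * length_scale**2))


def gp_predict(X_train, y_train, X_new, length_scale=1.0, noise_var=0.01):
    """Predictive mean and variance of noisy targets under a zero-mean GP (illustrative)."""
    n = X_train.shape[0]
    K = rbf_kernel(X_train, X_train, length_scale) + noise_var * np.eye(n)
    L = np.linalg.cholesky(K)                                     # O(n^3): factorise the n x n covariance
    weights = np.linalg.solve(L.T, np.linalg.solve(L, y_train))   # K^{-1} y via two triangular solves
    K_s = rbf_kernel(X_train, X_new, length_scale)                # kernel against every training point
    mean = K_s.T @ weights
    v = np.linalg.solve(L, K_s)
    var = 1.0 + noise_var - np.sum(v**2, axis=0)                  # k(x, x) = 1 for this kernel
    return mean, var
```

An RVM's test-time cost grows with the number of relevance vectors rather than with n. This is the practical difference the paragraph above describes.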

In practice, GPs tend to give slightly better-calibrated uncertainty estimates on smooth problems, while RVMs excel where sparsity and fast prediction are valued — for instance in real-time applications or when the training set is large enough that full GP inference becomes prohibitive. Both methods require choosing a kernel function, though RVMs additionally learn which training points are "relevant" and discard the rest.

Why Are Relevance Vector Machines Sparse?

The sparsity of RVMs comes directly from their Bayesian treatment of the model weights. Each weight wᵢ in the model is given its own precision (inverse variance) hyperparameter αᵢ. During training, the evidence framework maximises the marginal likelihood: the probability of the data given the model, integrated over all possible weight values. This process, called automatic relevance determination (ARD), drives most of the αᵢ values to infinity.
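The marginal likelihood and the re-estimation rules used to maximise it can be written as follows, in the standard regression formulation of Tipping (2001). The symbols are:

- Φ: the N × (N+1) design matrix, with a column of ones for the bias followed by Φₙᵢ = K(xₙ, xᵢ);
- t: the target vector;
- β: the noise precision;
- A = diag(α₀, …, α_N).

```latex
p(\mathbf{t} \mid \boldsymbol{\alpha}, \beta) = \mathcal{N}(\mathbf{t} \mid \mathbf{0}, \mathbf{C}),
\qquad \mathbf{C} = \beta^{-1}\mathbf{I} + \boldsymbol{\Phi}\mathbf{A}^{-1}\boldsymbol{\Phi}^{\top}

\boldsymbol{\Sigma} = \left(\mathbf{A} + \beta\,\boldsymbol{\Phi}^{\top}\boldsymbol{\Phi}\right)^{-1},
\qquad \boldsymbol{\mu} = \beta\,\boldsymbol{\Sigma}\boldsymbol{\Phi}^{\top}\mathbf{t}

\gamma_i = 1 - \alpha_i \Sigma_{ii},
\qquad \alpha_i^{\text{new}} = \frac{\gamma_i}{\mu_i^{2}},
\qquad \left(\beta^{\text{new}}\right)^{-1} = \frac{\lVert \mathbf{t} - \boldsymbol{\Phi}\boldsymbol{\mu} \rVert^{2}}{N - \sum_i \gamma_i}
```

Each γᵢ lies between 0 and 1 and measures how strongly the data determine weight i. Iterating these updates pushes the αᵢ of poorly determined weights upwards without bound.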

When a precision hyperparameter goes to infinity, the corresponding weight is forced to zero with certainty — the associated basis function (and the training point it represents) is effectively removed from the model. Only a small number of training points survive this pruning. These survivors are the relevance vectors, and they are typically far fewer than the support vectors retained by an SVM trained on the same data. Where an SVM might keep 30–50% of training points, an RVM often retains fewer than 5%.
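The pruning process can be illustrated with a minimal NumPy sketch for regression. Each iteration does three things:

- computes the posterior over the surviving weights;
- re-estimates every αᵢ as γᵢ / μᵢ², where γᵢ = 1 − αᵢΣᵢᵢ, and re-estimates the noise precision;
- drops any basis function whose αᵢ has effectively reached infinity.

The sketch makes several assumptions:

- a Gaussian (RBF) kernel with a hand-chosen, fixed length scale;
- a bias basis function;
- a hypothetical cut-off `alpha_max = 1e9` standing in for "infinity".

The names `rbf_kernel`, `fit_rvm` and `relevance_vector_indices` are hypothetical. The sketch follows the re-estimation scheme of Tipping (2001) rather than the faster algorithms developed later, so it illustrates the mechanism and is not meant as an efficient implementation.

```python
import numpy as np


def rbf_kernel(X1, X2, length_scale=1.0):
    """Gaussian (RBF) kernel matrix between the rows of X1 and X2."""
    sq = np.sum(X1**2, axis=1)[:, None] + np.sum(X2**2, axis=1)[None, :] - 2.0 * X1 @ X2.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * length_scale**2))


def fit_rvm(X, t, length_scale=1.0, n_iter=1000, alpha_max=1e9, tol=1e-6):
    """Illustrative RVM regression: re-estimate alpha and beta, pruning as alpha grows huge."""
    N = X.shape[0]
    # Design matrix: a bias column plus one kernel basis function centred on each training point.
    Phi = np.hstack([np.ones((N, 1)), rbf_kernel(X, X, length_scale)])
    keep = np.arange(Phi.shape[1])      # indices of basis functions still in the model
    alpha = np.ones(Phi.shape[1])       # one precision hyperparameter per weight
    beta = 1.0 / max(np.var(t), 1e-6)   # noise precision, initialised from the target variance

    def posterior(idx):
        """Gaussian posterior over the weights of the basis functions in idx."""
        P = Phi[:, idx]
        Sigma = np.linalg.inv(np.diag(alpha[idx]) + beta * P.T @ P)
        mu = beta * Sigma @ P.T @ t
        return P, Sigma, mu

    for _ in range(n_iter):
        P, Sigma, mu = posterior(keep)
        # gamma_i in (0, 1): how strongly the data determine weight i
        gamma = 1.0 - alpha[keep] * np.diag(Sigma)
        # Weights the data do not determine at all (gamma <= 0) get an infinite precision
        new_alpha = np.where(gamma > 0, gamma / np.maximum(mu**2, 1e-300), np.inf)
        resid = t - P @ mu
        beta = (N - gamma.sum()) / max(resid @ resid, 1e-12)
        converged = np.max(np.abs(np.log(new_alpha / alpha[keep]))) < tol
        alpha[keep] = new_alpha
        # Prune: a precision this large pins the weight to zero, so drop its basis function
        keep = keep[alpha[keep] < alpha_max]
        if converged or keep.size == 0:
            break

    P, Sigma, mu = posterior(keep)      # final posterior over the surviving weights
    return {"keep": keep, "mu": mu, "Sigma": Sigma, "beta": beta, "Phi": Phi}


def relevance_vector_indices(model):
    """Training-point indices of the relevance vectors (column 0 of Phi is the bias)."""
    keep = model["keep"]
    return keep[keep > 0] - 1


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = np.linspace(-10, 10, 100)[:, None]
    t = np.sinc(X[:, 0] / np.pi) + 0.1 * rng.standard_normal(100)   # noisy sin(x)/x
    model = fit_rvm(X, t, length_scale=2.0)
    print("relevance vectors:", len(relevance_vector_indices(model)), "of", len(X))
```

The printed count depends on the kernel length scale and the noise level. On data like this, the expectation is that only a small fraction of the 100 training points survive.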

This mechanism is what makes RVMs attractive for applications where fast prediction is important: fewer basis functions means fewer kernel evaluations at test time, leading to faster inference without sacrificing much accuracy.
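At test time only the surviving basis functions are evaluated. The sketch below is minimal and assumes an RBF kernel and that training has already produced:

- the relevance vectors `X_rv`;
- the posterior mean weights `mu`, with the bias weight first;
- the posterior covariance `Sigma`;
- the noise precision `beta`.

`rvm_predict` is a hypothetical name, and the numbers in the usage block are made up. Each test point costs one kernel evaluation per relevance vector. The predictive variance is the noise variance 1/β plus the weight-uncertainty term φ(x)ᵀΣφ(x).

```python
import numpy as np


def rbf_kernel(X1, X2, length_scale=1.0):
    """Gaussian (RBF) kernel matrix between the rows of X1 and X2."""
    sq = np.sum(X1**2, axis=1)[:, None] + np.sum(X2**2, axis=1)[None, :] - 2.0 * X1 @ X2.T
    return np.exp(-np.maximum(sq, 0.0) / (2.0 * length_scale**2))


def rvm_predict(X_new, X_rv, mu, Sigma, beta, length_scale=1.0, has_bias=True):
    """Predictive mean and variance of an RVM regressor, using only the relevance vectors."""
    # Kernel evaluations against the M relevance vectors only: an (n_new x M) matrix.
    Phi = rbf_kernel(X_new, X_rv, length_scale)
    if has_bias:
        Phi = np.hstack([np.ones((X_new.shape[0], 1)), Phi])
    mean = Phi @ mu
    # Noise variance 1/beta plus weight uncertainty phi(x)^T Sigma phi(x) for each test point
    var = 1.0 / beta + np.einsum("ij,jk,ik->i", Phi, Sigma, Phi)
    return mean, var


if __name__ == "__main__":
    # Made-up values standing in for a trained model with three relevance vectors.
    X_rv = np.array([[-1.0], [0.0], [2.0]])
    mu = np.array([0.1, 0.5, -0.2, 0.3])      # bias weight first
    Sigma = 0.01 * np.eye(4)
    mean, var = rvm_predict(np.linspace(-3, 3, 7)[:, None], X_rv, mu, Sigma, beta=100.0)
    print(mean, var)
```

Because `Sigma` covers only the M surviving weights, both the mean and the variance cost O(M²) per test point or less. A model with full kernel expansion would instead need work proportional to the whole training set.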

RVM vs SVM vs Gaussian Process

| Method | What It Learns | Uncertainty | Sparsity | Typical Use Case | Main Trade-off |
|---|---|---|---|---|---|
| RVM | Sparse set of relevance vectors via Bayesian inference | Yes: full predictive distribution | Very high (typically <5% of training points) | Real-time prediction, embedded systems, probabilistic forecasting | Slower training; non-convex optimisation |
| SVM | Maximum-margin hyperplane defined by support vectors | No: deterministic outputs only | Moderate (30–50% of training points) | Classification with small-to-medium datasets | No uncertainty; less sparse than RVMs |
| Gaussian Process | Full posterior over functions conditioned on all data | Yes: well-calibrated posterior | None (uses all training points) | Small datasets where calibrated uncertainty matters | O(n³) training cost; does not scale to large datasets |

Frequently Asked Questions about Relevance Vector Machines

What is a relevance vector machine?

A Relevance Vector Machine (RVM) is a Bayesian sparse kernel method for classification and regression, introduced by Michael Tipping in 2001. It places a prior over model weights and uses the evidence framework to automatically prune irrelevant basis functions during training. The result is a sparse model defined by a small number of "relevance vectors" that provides probabilistic predictions with calibrated uncertainty estimates.

How is an RVM different from an SVM?

Both are kernel-based methods, but SVMs find a maximum-margin separating hyperplane using a frequentist approach, while RVMs use Bayesian inference to determine which data points (relevance vectors) contribute to the model. RVMs typically produce much sparser solutions and provide probabilistic predictions, whereas SVMs offer deterministic outputs with strong generalisation guarantees. See the full comparison in Support Vector Machines Explained.

Why are relevance vector machines sparse?

RVMs achieve sparsity through automatic relevance determination (ARD). Each weight has its own precision hyperparameter. During training, the evidence framework drives most precision values to infinity, forcing the corresponding weights to zero and removing those basis functions from the model. Only a small number of training points — the relevance vectors — survive this pruning, typically fewer than 5% of the training set.

Are relevance vector machines Bayesian?

Yes. RVMs are fundamentally Bayesian: they place a prior distribution over the model weights and use type-II maximum likelihood (empirical Bayes) to learn the hyperparameters. This Bayesian formulation is what enables both the automatic pruning of irrelevant basis functions and the calibrated uncertainty estimates that RVMs provide with each prediction.

When should you use an RVM?

RVMs are preferred when you need probabilistic outputs (confidence intervals on predictions), when test-time speed is critical (RVMs use far fewer basis functions), or when you want an automatic method for selecting model complexity. SVMs may be preferred when you need guaranteed convex optimisation or when training speed is the bottleneck.

Does scikit-learn support relevance vector machines?

No. Scikit-learn does not include a built-in RVM implementation. RVMs are available through third-party Python libraries such as scikit-rvm (skrvm), which provides RVC and RVR classes that follow the scikit-learn estimator API.

Are relevance vector machines used in practice?

Yes, though less commonly than SVMs or Gaussian Processes. RVMs have found applications in signal processing, geostatistics, medical image analysis and financial prediction. Their sparsity makes them particularly attractive for embedded systems or real-time applications where prediction latency matters.

What are the disadvantages of relevance vector machines?

The main drawbacks are: (1) training can be slower than SVMs because the evidence framework involves iterative re-estimation of hyperparameters; (2) the solution is not guaranteed to be globally optimal; and (3) the model can be sensitive to the choice of kernel and initialisation. Despite these limitations, RVMs remain a valuable tool in the Bayesian machine learning toolkit.

What is automatic relevance determination in RVMs?

Automatic relevance determination (ARD) is the mechanism by which an RVM decides which basis functions (and therefore which training points) are important. Each weight in the model has an individual precision hyperparameter. During training, the evidence framework drives many of these precisions to infinity, effectively setting the corresponding weights to zero and removing the associated data points from the model. The surviving points are the "relevance vectors".

Related Tutorials

- Support Vector Machines Explained — the frequentist counterpart to RVMs, covering hard/soft margins and kernels

- The Kalman Filter Explained — filtering and smoothing in Linear Dynamical Systems

Written by Dr Tristan Fletcher.